After upgrading to 3.1, adding a node with the new version of the kk command fails with the following error:

master v1.20.4 [map[address:10.43.40.135 type:InternalIP] map[address:master type:Hostname]]

node1 v1.20.4 [map[address:10.43.40.136 type:InternalIP] map[address:node1 type:Hostname]]

node2 v1.20.4 [map[address:10.43.40.137 type:InternalIP] map[address:node2 type:Hostname]]

INFO[17:45:03 CST] Installing kube binaries

Push /home/ecarx/kubesphere/v3.1/kubekey/v1.20.4/amd64/kubeadm to 10.43.40.138:/tmp/kubekey/kubeadm Done

Push /home/ecarx/kubesphere/v3.1/kubekey/v1.20.4/amd64/kubelet to 10.43.40.138:/tmp/kubekey/kubelet Done

Push /home/ecarx/kubesphere/v3.1/kubekey/v1.20.4/amd64/kubectl to 10.43.40.138:/tmp/kubekey/kubectl Done

Push /home/ecarx/kubesphere/v3.1/kubekey/v1.20.4/amd64/helm to 10.43.40.138:/tmp/kubekey/helm Done

Push /home/ecarx/kubesphere/v3.1/kubekey/v1.20.4/amd64/cni-plugins-linux-amd64-v0.8.6.tgz to 10.43.40.138:/tmp/kubekey/cni-plugins-linux-amd64-v0.8.6.tgz Done

INFO[17:45:09 CST] Joining nodes to cluster

[node3 10.43.40.138] MSG:

[preflight] Running pre-flight checks

W0430 17:45:12.191451 9559 removeetcdmember.go:79] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] No etcd config found. Assuming external etcd

[reset] Please, manually reset etcd to prevent further issues

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

W0430 17:45:12.194980 9559 cleanupnode.go:99] [reset] Failed to evaluate the "/var/lib/kubelet" directory. Skipping its unmount and cleanup: lstat /var/lib/kubelet: no such file or directory

[reset] Deleting contents of config directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

[reset] Deleting contents of stateful directories: [/var/lib/dockershim /var/run/kubernetes /var/lib/cni]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

[node3 10.43.40.138] MSG:

[preflight] Running pre-flight checks

W0430 17:45:12.887514 9778 removeetcdmember.go:79] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] No etcd config found. Assuming external etcd

[reset] Please, manually reset etcd to prevent further issues

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

W0430 17:45:12.891470 9778 cleanupnode.go:99] [reset] Failed to evaluate the "/var/lib/kubelet" directory. Skipping its unmount and cleanup: lstat /var/lib/kubelet: no such file or directory

[reset] Deleting contents of config directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

[reset] Deleting contents of stateful directories: [/var/lib/dockershim /var/run/kubernetes /var/lib/cni]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

ERRO[17:45:13 CST] Failed to add worker to cluster: Failed to exec command: sudo env PATH=$PATH /bin/sh -c "/usr/local/bin/kubeadm join lb.kubesphere.local:6443 --token 4bk0fh.xyq5rzcjfq8aq8hx --discovery-token-ca-cert-hash sha256:c27daf7f404b0b425a6700cc94a57162dae7962456a52201e743a903bda2e1e8"

[preflight] Running pre-flight checks

[WARNING FileExisting-ebtables]: ebtables not found in system path

[WARNING FileExisting-ethtool]: ethtool not found in system path

[WARNING FileExisting-tc]: tc not found in system path

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.6. Latest validated version: 19.03

error execution phase preflight: [preflight] Some fatal errors occurred:

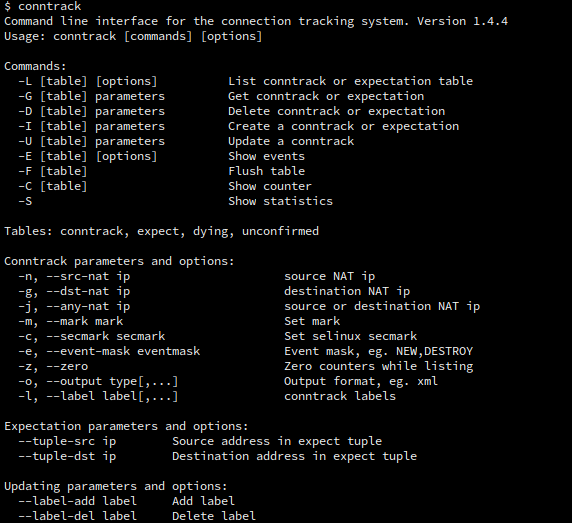

[ERROR FileExisting-conntrack]: conntrack not found in system path

[ERROR FileExisting-ip]: ip not found in system path

[ERROR FileExisting-iptables]: iptables not found in system path

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher: Process exited with status 1 node=10.43.40.138

WARN[17:45:13 CST] Task failed ...

WARN[17:45:13 CST] error: interrupted by error

Error: Failed to join node: interrupted by error

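Tools such as conntrack are in fact already installed on the node, and Docker was itself installed by kk, yet the output above reports that they are not found in the system path and that the Docker version is not supported.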
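Since the preflight errors say "not found in system path" rather than "not installed", one thing worth checking is whether the tools can actually be resolved on the PATH that the join command sees. The following is only a minimal diagnostic sketch, not something kk provides: `check_tools` is a hypothetical helper name, and the idea that the PATH differs between sessions is an assumption, not a confirmed cause.

```shell
#!/bin/sh
# Hypothetical helper: report which of the given tools resolve on the current PATH.
# Prints one line per tool: "<name> found <path>" or "<name> MISSING".
check_tools() {
    for tool in "$@"; do
        if path=$(command -v "$tool" 2>/dev/null); then
            echo "$tool found $path"
        else
            echo "$tool MISSING"
        fi
    done
}

# Show the PATH this shell is using, then check the tools
# named in the preflight errors and warnings above.
echo "PATH=$PATH"
check_tools conntrack ip iptables ebtables ethtool tc
```

Running this on node3 (10.43.40.138) once in an interactive login shell and once through a non-interactive SSH session as the user kk connects with would show whether the two environments report different PATH values. The log shows kubeadm being started with `sudo env PATH=$PATH`, so a PATH that lacks the directory holding these tools would plausibly produce exactly these errors even though the packages are installed.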